Overcoming Behavioral & Information Gaps (OBIG)

OBIG is an independent research project that studies behavioral and informational vulnerabilities and the ways they are exploited in everyday and digital environments, and develops practical solutions to enhance awareness, information discernment, and security.

Human and Humanity

We live in an era of rapidly accelerating change in technology and other spheres. Some people are frightened by these changes, while others feel inspired and energized by them. Some constantly engage with and learn new things, while others prefer not to delve too deeply into the new. Both perspectives are understandable, as keeping pace with such rapid shifts is indeed challenging.

However, one essential remains unchanged in its nature: humanity itself. Amidst this stream of change, it is vital not to abandon our humanity, integrity, kindness, and friendship. It is our humanity that distinguishes us from machines and algorithms: the capacity for genuine love, empathy, and accountability for our actions. In this context, we must remember that behind every action, including digital ones, stand real people with real lives and destinies.

Technology

Technology offers humanity greater opportunities by providing more free time. There was a time when people washed clothes by hand; today, washing machines and dishwashers are commonplace. Robots are now used for cleaning homes, mowing lawns, manufacturing, and business operations. This frees up time for more meaningful pursuits, such as face-to-face communication with loved ones, creativity, and working through personal psychological issues. We connect with family via video calls, access safer technology, discover treatments for previously incurable diseases, cultivate human organs for transplantation, and much more.

Technology also has a negative side. This project does not attempt to address every aspect of it. Here, I focus on researching the various vulnerabilities that fraudsters exploit in their criminal activities.

Cybercrime

In 2025–2026, cybercrime is no longer limited to individual "hackers." It has evolved into an established shadow digital economy with its own infrastructure, marketplaces, and even elements of "franchising," in which criminal operations are replicated and scaled like business franchises. This model is supported by evidence of Ransomware-as-a-Service (RaaS) networks [Source: FutureCISO, Cybercrime Magazine 2025/2026 reports]. This shadow economy causes trillions of euros in annual damage.

Among cybersecurity experts, the phenomenon of APT groups (Advanced Persistent Threats) is widely discussed. These are organized hacker collectives often linked to state interests. Their activities can lead not only to economic losses but also to severe consequences, including the undermining of democratic processes, disruption of critical infrastructure, and threats to human life and health.

While the cybersecurity industry constantly evolves, malicious actors actively leverage new technological tools to increase the effectiveness and scale of their attacks. Currently, a growing number of fraudulent attacks involve attackers manipulating AI systems. Users and companies, seeking to simplify daily tasks or reduce costs, increasingly delegate sensitive operations involving finances, confidential data, health, and safety to these systems. Under these conditions, attackers often only need to exploit existing vulnerabilities, without the need for complex technical intrusion.

Social Engineering Attack

A Social Engineering Attack (SEA) is the deliberate manipulation of human behavior based on the abuse of psychological and/or informational vulnerabilities. The attacker employs deception, impersonation (pretending to be someone else), or exploits circumstances to compel the victim to take actions leading to fraud or other unlawful consequences.

International cybersecurity organizations, including ENISA, emphasize that human behavior remains one of the key challenges in information security. According to the Verizon Data Breach Investigations Report (DBIR), "the human element is involved in the majority of breaches" [Source: Verizon DBIR].

In practice, an attacker often acts as a "chameleon," impersonating a trusted figure (a relative, colleague, security officer, or government official) to prompt a person, driven by fear or urgency, to take or refrain from taking specific actions. While methods vary, this pattern is the most common.

The most prevalent types of such attacks include:

- Phishing: Fraudulent emails

- Smishing: Fraudulent SMS messages

- Vishing: Fraudulent phone calls

Public awareness campaigns by the National Cyber Security Centre (NCSC) and An Garda Síochána recommend a simple principle: "Think before you click." In other words, do not rush, and always verify information [Source: NCSC Ireland].

These attacks target both regular users and employees of companies and government organizations.

In my view, every person has the right to a safe internet, for themselves and their children. It is crucial to maintain a balance: not to see a threat in everyone, but to remain informed, aware of risks, and attentive to one's actions in the digital environment.

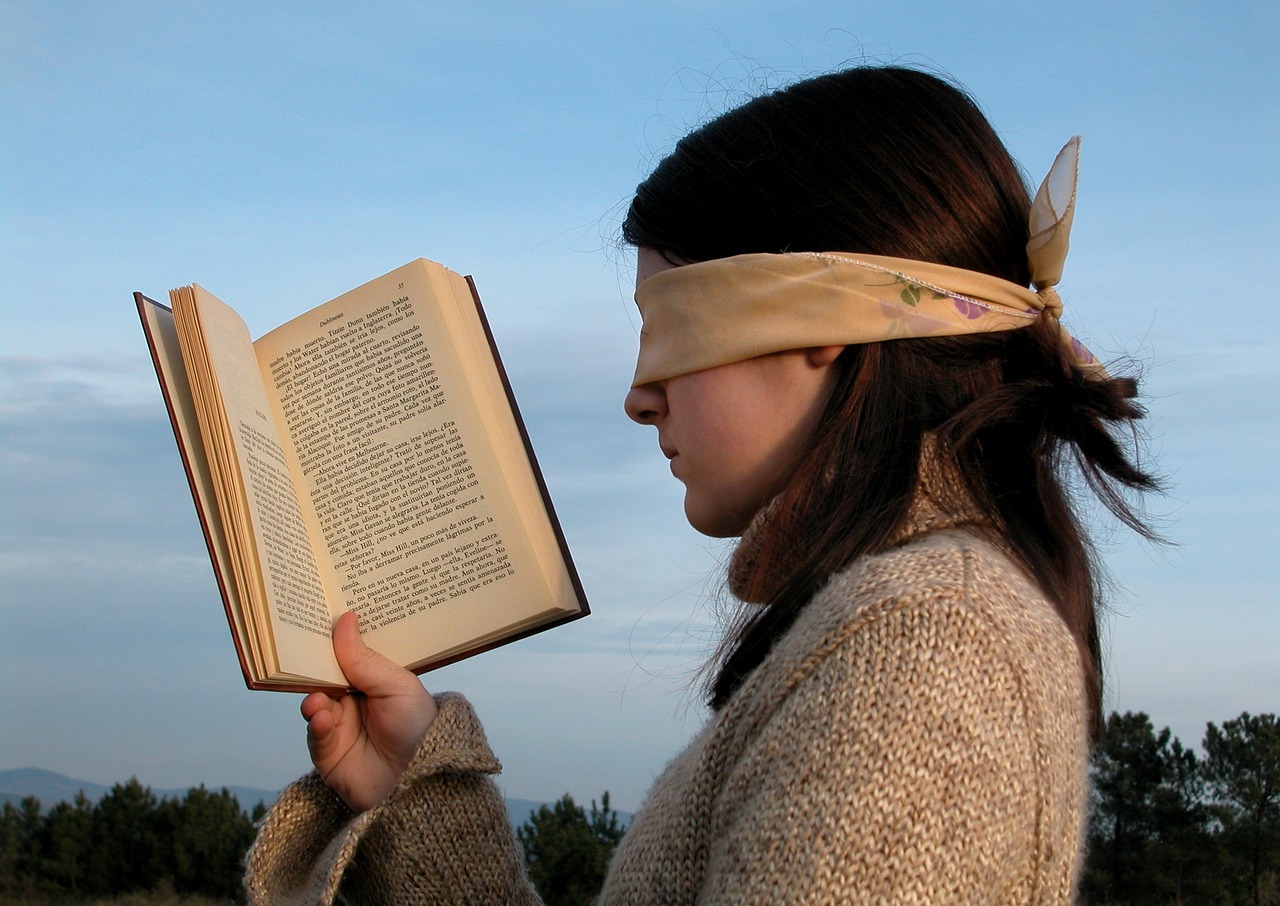

Media Literacy

Fakes and deepfakes have become key tools in the arsenal of fraudsters of all scales.

As Herbert A. Simon noted in Administrative Behavior (1947): "Decision-making is bounded by limitations of attention, information, and time" [Source: Simon, H. A. (1947). Administrative Behavior. Macmillan].

As Daniel Kahneman demonstrates: "A reliable way to make people believe in falsehoods is frequent repetition, because familiarity is not easily distinguished from truth" [Source: Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux].

Everyone has the right to timely, accurate, and trustworthy information. However, in today's digital environment, this right is increasingly accompanied by responsibility: to think critically, verify sources, analyze context, and approach decision-making consciously.

In practice, this boils down to simple steps: pause, calm down, and do not react immediately; listen to your intuition; think, and then verify the information before trusting it and/or sharing it.

- Researching behavioral and informational vulnerabilities to prevent social engineering attacks in both everyday life and the digital environment;

- Studying vulnerabilities in AI products through their development and ethical hacking;

- Investigating GRC (Governance, Risk, and Compliance);

- Exploring human rights, justice, and jurisprudence in general, including in the context of emerging technologies and new social realities;

- Analyzing media literacy and fact-checking practices as key tools for countering disinformation and manipulation;

- Implementing research findings to create applied preventive tools for building more resilient communities.

Unity

As noted by the National Cyber Security Centre (NCSC) Ireland: "Every citizen has a role to play in securing our digital environment" [Source: NCSC Ireland].

Whether we realize it or not, the security of a family or community largely depends on the awareness and digital hygiene of each member. The compromise of a single device or account can increase risks for everyone.

Governments and businesses must recognize that there are no "insignificant" users in the digital environment. Many people still consider themselves too uninteresting for attackers; however, in practice, such users often become entry points, for example through linked accounts, work contacts, or close social circles. Furthermore, less protected users are frequently targeted for testing attacks and training aspiring cybercriminals.

As Bruce Schneier states: "Security is a people problem" [Source: Schneier, B. (2015). "Security is a people problem." Schneier.com]. He also emphasizes that "Good security starts with good communication and trust among users."

Phase 1

Foundations & Accessibility

A) Interactive Threat Simulator (Tabletop Edition): Users immerse themselves in realistic scenarios modeled on the social engineering attacks that hackers actually use. In a safe environment, free from tests and grading, they are presented with alternative cause-and-effect pathways. This allows them to gain awareness of such attacks and explore balanced protective responses. The goal is not to test knowledge, but to safely experience risk and identify effective solutions.

B) Children's Books and Youth Simulator Development: Materials that foster a culture of security from an early age.

C) Research Findings Dissemination: Publishing and sharing research data through multiple communication channels.
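To illustrate the "alternative cause-and-effect pathways" idea behind the Interactive Threat Simulator in item A, here is a minimal Python sketch of a branching scenario. It is not the project's actual design: the `Step` class, the `SCENARIO` content, and the `walk` function are all hypothetical names invented for this example. Each step offers choices, each choice leads to a consequence, and nothing is scored or graded.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Step:
    """One moment in a scenario (hypothetical structure, for illustration only)."""
    text: str
    # Maps a choice label to the id of the step it leads to.
    # An empty mapping marks the end of a pathway.
    choices: dict[str, str] = field(default_factory=dict)


# A made-up smishing scenario with two alternative pathways.
SCENARIO = {
    "start": Step(
        "An SMS claims your bank account is locked and asks you to tap a link.",
        {"tap_link": "fake_page", "call_bank": "verified"},
    ),
    "fake_page": Step(
        "The link opens a look-alike login page asking for your password.",
        {"enter_password": "compromised", "close_page": "verified"},
    ),
    "verified": Step(
        "You call the bank on the number printed on your card. "
        "The bank confirms the message was fraudulent."
    ),
    "compromised": Step(
        "The attacker now holds your credentials. "
        "Next step: contact the bank and change the password immediately."
    ),
}


def walk(scenario: dict[str, Step], choices: list[str], start: str = "start") -> list[str]:
    """Follow a sequence of choices and return the ids of the steps visited."""
    current = start
    path = [current]
    for choice in choices:
        options = scenario[current].choices
        if choice not in options:
            raise ValueError(f"'{choice}' is not available at step '{current}'")
        current = options[choice]
        path.append(current)
    return path


if __name__ == "__main__":
    # Explore one pathway and show each consequence, without scoring it.
    for step_id in walk(SCENARIO, ["tap_link", "close_page"]):
        print(f"[{step_id}] {SCENARIO[step_id].text}")
```

Because every pathway is plain data, a facilitator could replay the same scenario with different choices and compare outcomes side by side, which matches the stated goal of experiencing risk safely rather than testing knowledge.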

Phase 2

Implementation & Specialization

A) Facilitated Workshops: Group sessions led by professional facilitators to practice skills within a team.

B) Vulnerability Testing in AI Assistants and Bots: Analysis and protection of artificial intelligence against manipulation and injection attacks.

Phase 3

Scaling & Strategy

A) Digital Versions of the Interactive Threat Simulator: Online platform for mass access and automated training.

B) Additional Technical Products: Tools for integration into existing IT ecosystems.

C) GRC Consulting and Secure Architecture Design: Strategic risk management and security system architecture design.

Support

The project is being developed as a commercial initiative; however, sales have not yet launched. If the mission of OBIG Laboratory resonates with you and you wish to contribute to its growth, you can support the project financially:

- One-time donation — a single contribution.

- Recurring support — monthly subscription.

Your contribution will help us continue our research, develop tools, and make the world a little safer.